The paper A Neural Algorithm of Artistic Style detailed on how to extract two sets of features from a given image: the content, and and the style.

In convolutional neural networks, each layer stores information in an abstraction based on the previous layer. For example, the first layer may search for dark pixels in a line to represent an edge. The next layer may then look for two perpendicular edges to represent a corner. The last layer can then return a classification based on which of the features are present and how they are arranged.

If we pass an image through a convolutional network and record the activations of each layer, or what information was passed through, we can retrieve a general structure of the contents of an image.

This information changes based on which layer is used. Information from the first layer would contain what specific pixels were present, while higher layers might show where edges are present, but not what pattern of pixels are considered edges.

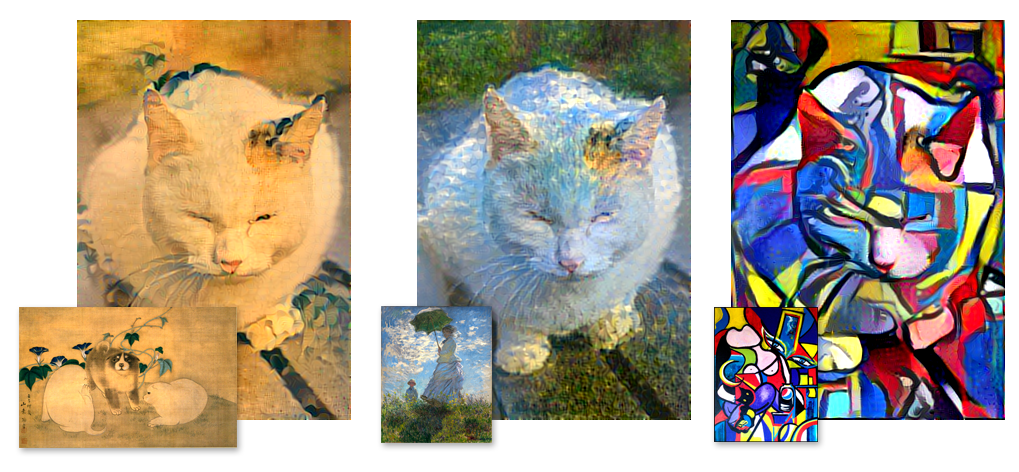

These images would have different first layer activations, but very similar 4th layer activations in VGG.

In short, different images can still contain the same high-level features while having completely different pixel makeup. Artistic style is simply how higher-level features such as shapes are represented on the pixel level.

To create an blended image, we need to perform gradient descent on a cost function with two components: the content of the image and the style. Content matching can be calculated by optimizing the difference in activations between the generated image and the base image in the higher layers of the network.

On the other hand, we can't just optimize for lower-level activations to match style, as the information would contain the content of the style image. Instead, we take the correlations of features, which captures low-level representations but not the overall arrangement.

Recreating an image in the style of another would follow the following basic steps:

- Preprocess both images, accounting for the mean and standard deviation.

- Select convolutional layers for representing style. These are generally spread out throughout the network.

- Select one layer to represent content, usually one of the middle to higher layers.

- Forward pass the base image and record the content layer activations.

- Forward pass the style image and record the style layer activations.

- Perform gradient descent on a cost function consisting of both content and style differences.

For more details, I've created a simplified version of kaishengtai's neuralart over on my github.

Image from http://www.shun.bz/20150914/1039995965.html